The Humanoid Robots Lab at the University of Freiburg uses the Aldebaran Nao robot for a variety of research, from climbing stairs to imitating human motions to footstep planning. One of their Naos, nicknamed "Osiris", has a special modification: a head-mounted Hokuyo laser rangefinder. This modification enables their research on localization for humanoid robots in complex environments.

Localization is particularly difficult on humanoid robots because the robot shakes while it moves. Using techniques that will be outlined in an upcoming IROS paper [1], they are able to localize the Nao's torso in 6D (3D position plus roll, pitch, and yaw) based on laser, odometry, IMU, and proprioception data. In the video above, you can see Osiris localizing itself while walking and climbing stairs.

The researchers at Uni Freiburg are long-time contributors to ROS and run their own open-source repository, alufr-ros-pkg, which contains libraries for articulation models, 3D occupancy grids (OctoMap), and a Nao stack that builds on Brown's Nao driver to provide additional ROS integration.

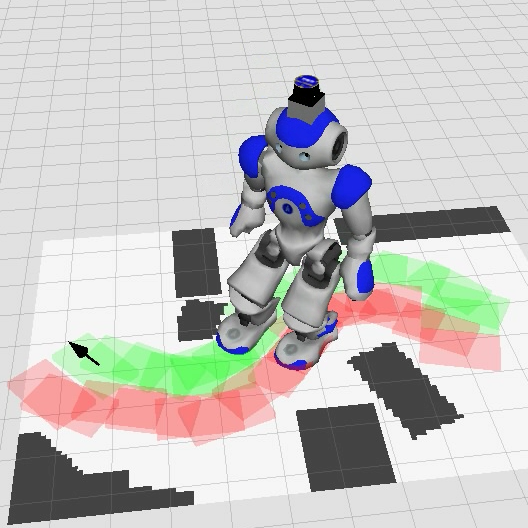

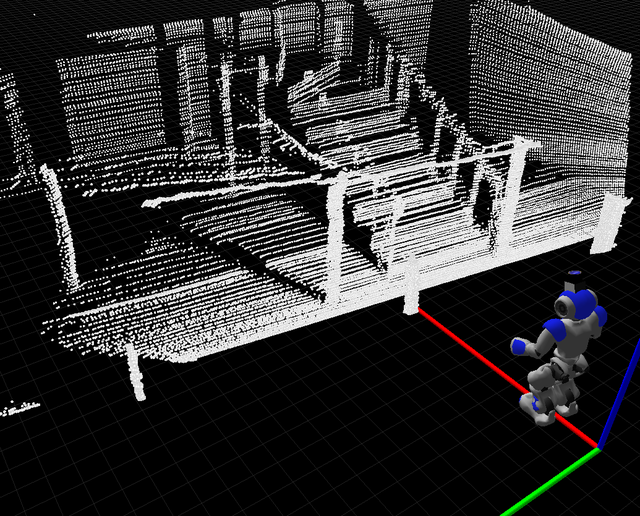

Uni Freiburg hopes to build on this research to work towards a full navigation stack for humanoids. This will include a footstep planning library, which they will be releasing in alufr-ros-pkg soon. The accompanying screenshots show their 3D scans and footstep plans in rviz.

[1] "Humanoid Robot Localization in Complex Indoor Environments" by Armin Hornung, Kai M. Wurm, and Maren Bennewitz (to be presented at IROS 2010).

Previously: Robots Using ROS: Aldebaran Nao
