We're pleased to announce that registration is now open for ROS Kong 2014, an international ROS users group meeting, to be held on June 6th at Hong Kong University, immediately following ICRA:

https://events.osrfoundation.org/ros-kong-2014/

This one-day event, our first official ROS meeting in Asia, will complement ROSCon 2014, which will happen later this year (stay tuned for updates on that event).

Register for ROS Kong 2014 today: https://events.osrfoundation.org/ros-kong-2014/#registration

Early registration ends April 30, 2014.

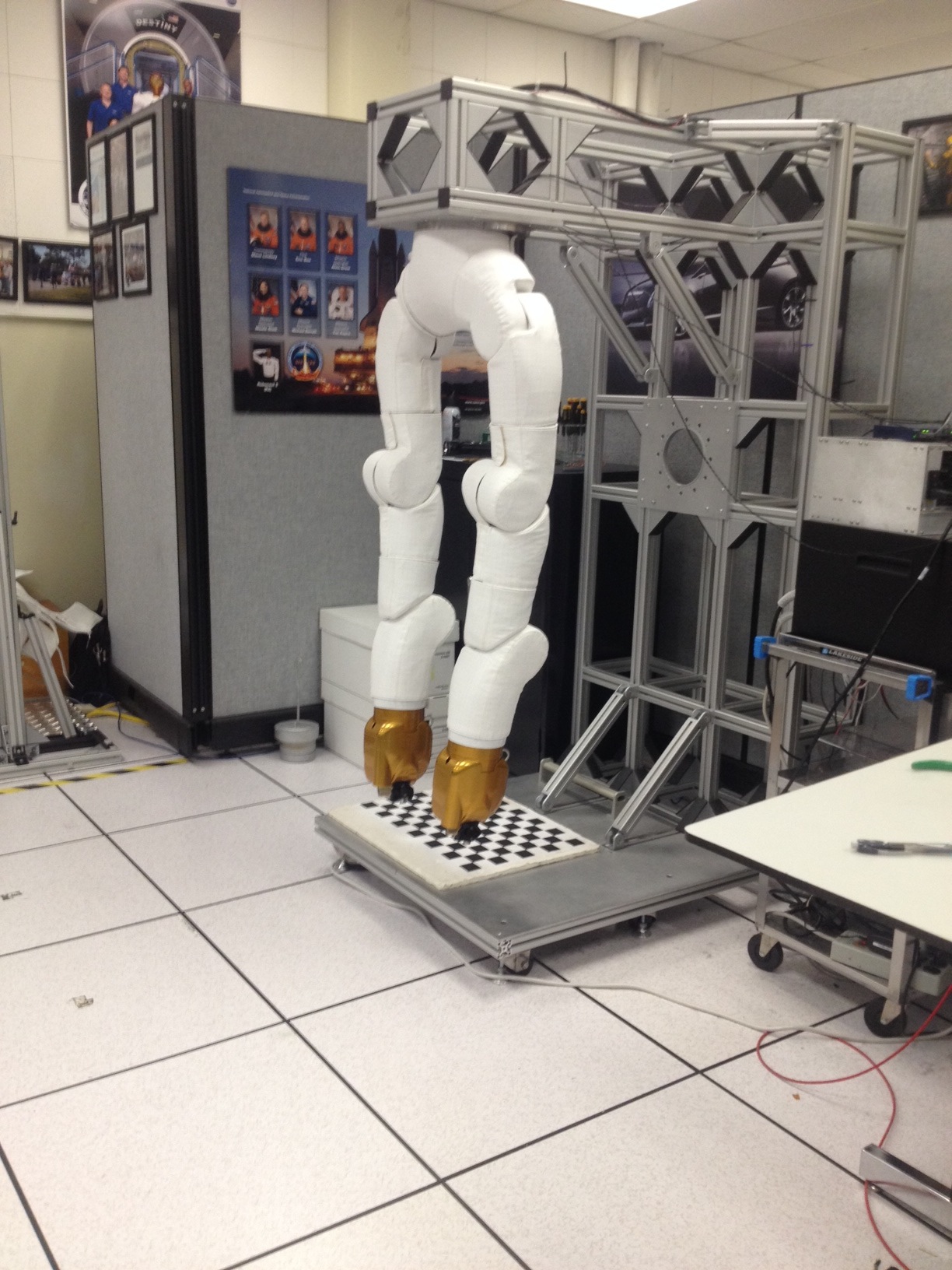

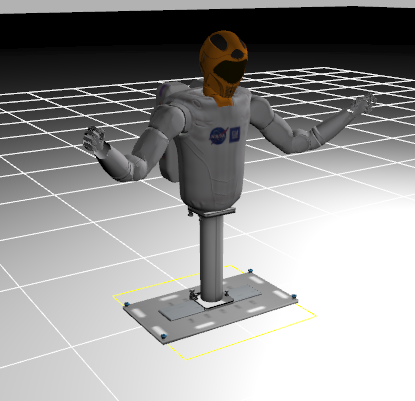

ROS Kong 2014 will feature:

* Invited speakers: Learn about the latest improvements to and applications of ROS software from some of the luminaries in our community.
* Lightning talks: One of our most popular events, lightning talks are back-to-back 3-minute presentations that are scheduled on-site. Bring your project pitch and share it with the world!
* Birds-of-a-Feather (BoF) meetings: Get together with folks who share your specific interest, whether it's ROS in embedded systems, ROS in space, ROS for products, or anything else that will draw a crowd.

To keep us all together, coffee breaks and lunch will be catered on-site. There will also be a hosted reception (with food and drink) at a classic Hong Kong venue at the end of the day. Throughout the day, there will be plenty of time to meet other ROS users from Asia and around the world.

If you have any questions or are interested in sponsoring the event, please contact us at roskong-2014-oc@osrfoundation.org.

Sincerely,

Your ROS Kong 2014 Organizing Committee

Tully Foote, Brian Gerkey, Wyatt Newman, Daniel Stonier