We hope you all enjoyed the IR image access. We'll have several Kinect-related announcements this week. Stay tuned!

November 2010 Archives

Robotics Engineering Excellence (re2, Inc.) is a research and development company that focuses on advanced mobile manipulation, including self-contained manipulators and payloads for mobile robot platforms. As a spin-out of Carnegie Mellon, they've developed plug-n-play modular manipulation technologies, a JAUS SDK, and unmanned ground vehicles (UGV). They focus on the defense industry and their clients include DARPA, the US Armed Forces (Army, Navy, Air Force), Robotics Technology Consortium, and TSWG. RE2 has recently adopted ROS as a platform to architect and organize code.


RE2 has several projects using ROS, including interchangeable end-effectors and force/tactile feedback for manipulators. Their Small Robot Toolkit (SRT) is a plug-n-play robot arm with interchangeable end-effector tools, which can be used as a manipulator payload for mobile platforms. RE2 has also developed the capability to automatically change out end-effectors, which is being used with a modular recon manipulator for vehicle-borne IEDs. Bomb technicians can switch between various tools, like drills, saws, and scope cameras, to inspect vehicles remotely. RE2 is also working on a force and tactile sensing manipulator, which provides haptic feedback for an operator. This sort of feedback makes it easier to perform tasks like inserting a key into a lock, or controlling a drill.

RE2's manipulation technologies are also being used on mobile platforms. They are developing a Robotic Nursing Assistant (RNA) to help nurses with difficult tasks, such as helping a patient sit up, and transferring a patient to a gurney. The RNA uses a mobile hospital platform with dexterous manipulators to create a capable tool for nurses to use. RE2 is also working on an autonomous robotic door opening kit for unmanned ground vehicles.

RE2's expertise in manipulation made them a natural choice to be the systems integrator for the software track of the DARPA ARM program. The goal of this track is to autonomously grasp and manipulate known objects using a common hardware platform. Participants will have to complete various challenges with this platform, like writing with a pen, sorting objects on a table, opening a gym bag, inserting a key in a lock, throwing a ball, using duct tape, and opening a jar. There will also be an outreach track that will provide web-based access. This will enable a community of students, hobbyists, and corporate teams to test their own skills at these challenges.

RE2 had its own set of challenges: build a robust and capable hardware and software platform for these participants to use. The ARM robot is a two-arm manipulator with a sensor head. The hardware, valued at around half a million dollars, includes:

- Manipulation

  - Two Barrett WAM arms (7-DOF with force-torque sensors)

  - Two Barrett Hands (three-finger, tactile sensors on tips and palm)

- Sensor head

  - Swiss Ranger 4000 (176x144 at 54fps)

  - Bumblebee 2 (648x488 at 48fps)

  - Color camera (5MP, 45 deg FOV)

  - Stereo microphones (44kHz, 16-bit)

  - Pan-tilt neck (4-DOF, dual pan-tilt)

A future version of the robot will incorporate a mobile base.

The software platform on the ARM robot is built on top of ROS. ROS was selected by RE2 for its modularity and tools. The modularity was important as the DARPA ARM project features an outreach program that will be providing a simulator. Users can switch between using the simulated and real robot with no changes to their code. The ARM platform also takes advantage of core ROS tools like rostest for testing and rosbag for data logging.
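As a rough illustration of how user code can stay unchanged between a simulated and a real robot, here is a minimal sketch in plain Python. It does not use the ARM platform's actual API: `ArmBackend`, `SimulatedArm`, `LoggingArm`, and `raise_elbow` are hypothetical names made up for this example, and the "hardware" class is only a stand-in that records commands. In ROS itself, the same effect typically comes from both the simulator and the drivers exposing the same topics and services.

```python
from abc import ABC, abstractmethod
from typing import List


class ArmBackend(ABC):
    """Hypothetical interface that both a simulator and a hardware driver implement."""

    @abstractmethod
    def command_joints(self, positions: List[float]) -> None:
        """Request new joint positions (radians)."""

    @abstractmethod
    def read_joints(self) -> List[float]:
        """Return the most recent joint positions (radians)."""


class SimulatedArm(ArmBackend):
    """Toy simulator: joints jump straight to the commanded positions."""

    def __init__(self, dof: int = 7) -> None:
        self._positions = [0.0] * dof

    def command_joints(self, positions: List[float]) -> None:
        if len(positions) != len(self._positions):
            raise ValueError("expected %d joint values" % len(self._positions))
        self._positions = list(positions)

    def read_joints(self) -> List[float]:
        return list(self._positions)


class LoggingArm(ArmBackend):
    """Stand-in for a hardware driver: records commands instead of moving motors."""

    def __init__(self, dof: int = 7) -> None:
        self._positions = [0.0] * dof
        self.log: List[List[float]] = []

    def command_joints(self, positions: List[float]) -> None:
        if len(positions) != len(self._positions):
            raise ValueError("expected %d joint values" % len(self._positions))
        self.log.append(list(positions))
        self._positions = list(positions)

    def read_joints(self) -> List[float]:
        return list(self._positions)


def raise_elbow(arm: ArmBackend, angle: float = 0.5) -> List[float]:
    """User code: written once against the interface, unaware of the backend."""
    target = arm.read_joints()
    target[3] = angle  # index 3 is assumed to be the elbow joint in this sketch
    arm.command_joints(target)
    return arm.read_joints()


if __name__ == "__main__":
    # The same function runs against either backend without modification.
    for backend in (SimulatedArm(), LoggingArm()):
        print(type(backend).__name__, raise_elbow(backend))
```

The point of the design is that `raise_elbow` depends only on the shared interface, so choosing between simulation and hardware becomes a one-line decision at startup rather than a code change.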

ROS has already proven itself on the similar CMU HERB robot, which has two Barrett arms and a mobile base. The various participants, including those in the outreach track, will be able to take advantage of the many ROS libraries for perception, grasping, and manipulation. This includes open-source frameworks like OpenRAVE, which was used on HERB for grasping and manipulation tasks.

Announcement from Gonçalo Cabrita of ISR - University of Coimbra

Hi everyone!

The ISR-UC ROS repository has just been updated! Everything is now well organized and in place. This includes projects like:

- The WifiComm multi-robot communication package;

- The CerealPort C++ serial port class;

- Our Roomba stacks with a bunch of new stuff;

- PlumeSim, the plume simulator;

- Scout driver for ROS (Scouts used to be quite popular).

In the following days we'll be creating wiki pages for all the new stuff including some tutorials.

We hope our contribution can be of some help to the ROS community.

Gonçalo Cabrita

ISR - University of Coimbra

Portugal

Sixty-five students spent the first week of November at the very first CoTeSys-ROS School on "Cognition-enabled Mobile Manipulation". They focused on the challenges of personal robotics, like manipulating items in human environments, attending many lectures and getting hands-on experience with the PR2 robot and TUM-Rosie. They learned everything from basic ROS concepts to navigation, perception, planning and grasping, knowledge processing, and reasoning. By the end of the week, they were able to program a robot to navigate to a table, perceive objects on it, infer missing items, fetch the missing items, and bring them to the table -- an impressive feat.

The feedback on this first event was very positive. "Perhaps the most valuable was meeting researchers and other Ph.D students working in research areas closely related to my own, but of course the talks and tutorials themselves were almost as valuable; it would have taken much longer to learn all these things on my own," said one participant.

The Fall School may have been limited to sixty-five students, but thanks to the organizers, you can watch videos of the lectures and download all the code materials. You can read more feedback on the event at the official report. Many thanks to the organizers in the IAS department at TUM and the many guest lecturers that made this first event a success.

The CoTeSys Fall School was jointly organized by the IAS department at TUM (Technische Universität München) and Willow Garage.

Announcement from Associate Professor Chad Jenkins at Brown

Hi ros-users,

Just a quick heads up that brown-ros-pkg now includes ardrone_brown, an AR.Drone driver that supports the front camera.

The following video is a test of ardrone_brown for visually following an AR Tag, using ar_recog (ARToolkit) for tag recognition:

The tag following behavior is produced by the nolan package that is run with only gain modifications from our previous AR following video.

More to come.

-Chad

p.s. Dear Parrot, thanks for releasing Development License v2!
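To make the "only gain modifications" remark above a little more concrete, here is a small sketch of a proportional tag-following controller in Python. This is not the nolan package's code: the function `follow_tag`, the `Gains` fields, and the sign convention (positive yaw turns left, so a tag to the right of center produces a negative yaw command) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Gains:
    """Hypothetical per-robot tuning: the only part that changes between platforms."""
    yaw: float      # turn rate per unit of normalized horizontal error
    forward: float  # forward speed per unit of normalized size error


def follow_tag(tag_x: float, tag_width: float, image_width: float,
               target_width: float, gains: Gains,
               limit: float = 1.0) -> Tuple[float, float]:
    """Return (yaw, forward) commands, each clamped to [-limit, limit]."""
    half = image_width / 2.0
    # Horizontal error in [-1, 1]: positive when the tag is right of center.
    x_error = (tag_x - half) / half
    # Size error: positive when the tag looks smaller (farther) than desired.
    size_error = (target_width - tag_width) / target_width

    def clamp(value: float) -> float:
        return max(-limit, min(limit, value))

    yaw = clamp(-gains.yaw * x_error)            # turn toward the tag
    forward = clamp(gains.forward * size_error)  # approach or back away
    return yaw, forward


if __name__ == "__main__":
    ground_robot = Gains(yaw=0.5, forward=0.4)
    drone = Gains(yaw=0.2, forward=0.1)  # gentler gains, same control logic
    for gains in (ground_robot, drone):
        print(follow_tag(tag_x=240, tag_width=40, image_width=320,
                         target_width=80, gains=gains))
```

Under these assumptions, moving the behavior from one robot to another means swapping the `Gains` values while the control logic stays the same.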

Announcement from Armin Hornung of Albert-Ludwigs-Universität

The OctoMap 3D mapping library is now available as a precompiled .deb package for Ubuntu in the ROS cturtle and unstable repositories. The octomap_mapping stack contains the actual octomap package for ROS and a map server. Detailed API documentation is available at the wiki page under "Code API".

This coincides with the release of OctoMap v0.8, which the octomap_mapping stack now builds on. Compared to previous versions, the optimizations in OctoMap 0.8 significantly speed up map building and queries on the map. As always, we're curious to hear how you use OctoMap and if there are suggestions for further improvements.

Best regards,

Armin Hornung and Kai M. Wurm

Announcement from Geoff Biggs of AIST on creating a standalone release of PCL

Hi all,

For all of us who plan to spend Thanksgiving working (perhaps we enjoy coding more than turkey, or it could just be that we don't live in the US...), there's a brand new release of PCL. This brings it up to 0.5. (I'm reliably informed that this makes it "half-way decent.")

In conjunction with the 0.5 release, we have just put the finishing touches on a standalone distribution of PCL. This means that those of you who are not using ROS can now use PCL in your applications.

It's still somewhat unrefined, and we need to work with some of the dependencies to make installation easier. Look for many improvements as we head towards 1.0!

Thanks again to all the contributors!

Geoff

Just in time for Thanksgiving, we've got some new things for the ROS and ROS-Kinect community to play with.

- Access to Kinect's raw IR image

- Kinect debians for C Turtle

- ROS debians for Ubuntu Maverick on C Turtle

For the Kinect, we've achieved a major breakthrough: access to the IR camera image. Now that we have access to the raw IR camera image, instead of just the depth image, we can do monocular and stereo calibration using the regular ROS calibration tools and methods. We're taking a short break for Thanksgiving, but we should have RGB/depth calibration by the end of the week.

Regular ROS-Kinect users can just update to get these changes -- or you can try:

sudo apt-get update

sudo apt-get install ros-cturtle-kinect

We have a lot more in the works, so stay tuned. Happy Thanksgiving!

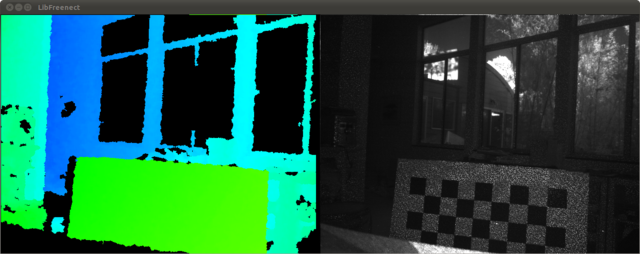

The ROS/Kinect integration continues to progress quickly thanks to the efforts of the OpenKinect and ROS community. The initial ROS+Kinect contributors -- Alex Trevor, Ivan Dryanovski, Stéphane Magnenat, and William Morris -- have combined their efforts into a new ROS kinect stack. At Willow Garage, our engineers and researchers have also been working on the stack to improve the driver and integrate it with ROS libraries and tools. The community is now hard at work on solving problems like calibration, which will be important for using the Kinect in robotics. Feel free to sign up for the ros-kinect mailing list to keep up-to-date on the latest efforts.

We thought we'd make a quick video to show some of what's going on at Willow Garage with the Kinect. We've added features like multi-camera support and control of the Kinect motors to the popular libfreenect library. We're also working on making some fun Kinect hacks of our own -- watch until the end of the video to see where we are with those. We look forward to seeing your videos as well.

Skybotix put out a video of their CoaX helicopter running with ROS teleoperation:

You can read more at I Heart Robotics.

Roland Philippsen sent in a bunch of photos of the ROS flag flying proudly with many of the ETH Zurich Autonomous Systems Lab robots running ROS, which we covered back in October.

Pictured: Markus Achtelik, Andreas Breitenmoser, Gregory Hitz, Ming Liu, Roland Philippsen.

Additional credits: Cedric Pradalier, Ralf Kaestner, Stephane Magnenat, Prof. Roland Siegwart.

Robot links: Magnebike, Limnobotics, sFly.

Hector Martin's libfreenect open-source driver for the Microsoft Kinect has led to several efforts within the ROS community to create Kinect drivers for ROS. Stéphane Magnenat (ETH Zurich) and Alex Trevor were the first to port libfreenect to ROS. The CCNY Robotics Lab has now added their kinect_node package, which adds documentation, depth calibration, and example bag files. It's great to see how their efforts have contributed to each other, as well as to the broader libfreenect community.

The Kinect is obviously an important sensor for robotics. It's a sensor that we can all own at home, instead of having to share in a lab. That has already enabled so many people to quickly work together on an open-source driver, and it will be great to see what the community can build together next.

TheCorpora has put out their own Linux distribution, based on Ubuntu 10.04, that neatly packages everything you need to get up and running with Qbo. It comes with ROS, OpenCV, Festival speech synthesis, Julius speech recognition, Qbo-specific libraries, and more. This includes capabilities, like Qbo_Visual_Slam, shown below:

It's hard to believe, but it has now been three years since we set out to create an open source software platform for the robotics industry. That effort has come to be known as ROS, which initially began as a collaboration between the STAIR project at Stanford and the Personal Robots Program at Willow Garage. Just a few short years later, we're excited to see how many individuals and institutions have joined in this collaboration. ROS (for Robot Operating System) is completely open source (BSD) and is now in use around the world in North America, Europe, Asia and Australia. There are robots running on ROS indoors and out, above and below the sea, and even flying overhead.

As we celebrate this occasion, we thought it would be a good time to share the "State of ROS" and talk about what's next.

Stats

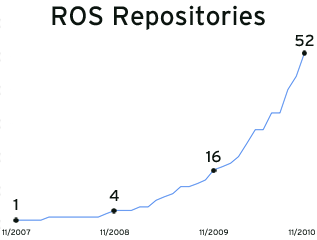

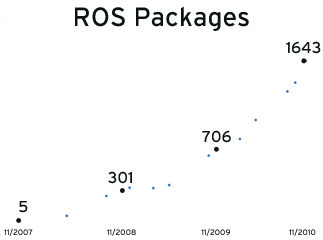

There are now over 50 public ROS repositories contributing open-source libraries and tools for ROS, and more than 1600 public ROS packages. We are aware of over 50 robots using ROS, including mobile manipulators, quadrotors, cars, boats, space rovers, hobby platforms, and more.

People often ask how many users of ROS there are. Due to the free nature of ROS, we simply don't know. What we do know is that since the ROS C Turtle release this past August, there have been over 15,000 unique visitors to the ROS C Turtle installation instructions, with over 6,000 unique visitors in October.

ROS has grown very quickly this past year. Below are a few charts showing the growth in the number of public ROS repositories and ROS packages. As large as the ROS community is, you can see that things are just getting started.

Universities Using ROS

Academic contributors are the backbone of the ROS community, providing nearly three-fourths of the public ROS repositories. These contributions are helping to push the bleeding edge of ROS capabilities, and are also expanding ROS to new robot platforms. In the process, they are creating new communities within ROS to collaborate at the hardware, software, and research levels.

These individuals and departments have greatly expanded the range of hardware that can be used with ROS. Thanks to their efforts, you can now use ROS with iRobot Creates (Brown University), Aldebaran Naos (Brown, Uni Freiburg), AscTec quadrotors (CCNY), Barrett arms (MIT), Velodynes (UT Austin/ART), Pioneers (USC, AMOR), Parrot AR.Drones (SIUE), and much, much more.

Several core ROS libraries are being built with the help of academic institutions: the ROS motion planning library is being built with contributions from the Kavraki Lab at Rice University, the CLMC Lab at USC, GRASP Lab at Penn, and Willow Garage; the Point Cloud Library is being built with contributions from researchers at TUM, Uni Freiburg, Willow Garage, and MIT; and the visual SLAM library is being built in collaboration with Uni Freiburg and Imperial College of London. There are also individual contributions: Jack O'Quin at UT Austin provided an official Firewire camera driver and René Ladan has done a FreeBSD port of ROS.

At the research level, the contributions are too broad to easily summarize. Whether you're doing research in 3D perception, manipulation, cognitive robotics, mapping, motion planning, controls, grasping, SLAM, HRI, or object recognition, there are ROS packages representing current research. We look forward to a world where "academic publication" refers to code as much as it does papers, and, thanks to the ROS community, we are starting to see that happen. We are also building tools to help researchers cite ROS code used in their publications.

Companies Using ROS

ROS has been adopted by robot hardware manufacturers, commercial research labs, and software companies. These institutions include Meka, Fraunhofer IPA, Shadow Robot, Yujin Robot, Thecorpora, Robotics Equipment Corporation (REC), Skybotix, Kuka (youBot), and Vanadium Labs. All are using ROS as a software platform for their customers to build on. Additionally, several of these companies are using ROS to build their own robot applications. ROS is also being used in research and development at Bosch, re2, Aptima, and Southwest Research Institute.

What's even more exciting than seeing companies using ROS is organizations contributing open source software to the community. There are ROS repositories for Bosch, Shadow Robot, Aptima, Fraunhofer IPA, Robotino (maintained by REC) and Vanadium Labs. Meka and Skybotix are both providing open-source drivers for their hardware, and Thecorpora's Qbo is billed as an "open-source robot." More broadly, Yujin Robot has released their embedded tools for ROS and REC has ported ROS to Windows.

Companies developing software libraries for robotics have also been supportive of open source and ROS. Gostai completed the transition of the Urbi SDK to open source this year, and the 2.1 release added support for ROS. SRI released components from the Karto SLAM SDK as open source on code.ros.org and is supporting ROS integration.

Programs Using ROS

Various research programs are embracing ROS as a platform. ROS was created, in part, to support the PR2 Beta Program and encourage the exchange of ideas through software. This year, in addition to the official start of the PR2 Beta Program, there have been two DARPA programs announced that are using ROS: Maximum Mobility and Manipulation (M3) and ARM-S. The ARM-S program is providing a shared manipulation platform with ROS drivers that will enable participants to work with the ROS community.

And in Europe, there is the BRICS Project, which aims to identify and promote good development practice and reusable components for robotics. The BRICS participants are making use of many ROS packages, and working to integrate them with other robot software systems.

Building Bridges

One of the values of being open source is that it's much easier to collaborate than compete. With so much great open source software out there, it's wonderful that various robot software frameworks can build on each other's strengths rather than forcing users to choose between them. This year we've seen ROS integrated with OpenRTM, Urbi, and PIXHAWK. There is also improved integration with Orocos.

ROS has also been integrated with other programming languages thanks to members of the community. Tim Niemueller has contributed a Lua client library for ROS, which also helps provide integration with the Fawkes Framework. Brown University has contributed a Javascript library for ROS that lets you control ROS-based robots directly from a Web browser.

Hobby and Low-Cost Platforms

ROS now runs on many lower-cost, hobby-friendly platforms. 2010 started off with Andrew Harris providing ROS libraries for the Arduino and was quickly followed by I Heart Robotics's WowWee Rovio drivers. You can now use Lego NXT robots with ROS as well as Taylor Veltrop's drivers for Roboard-equipped humanoids. Companies have also contributed: Vanadium Labs provided ROS drivers for their ArbotiX line of robocontrollers.

The ROS iRobot Create/Roomba community has also expanded greatly this year, with many institutions and individuals now providing drivers and libraries: Brown's RLAB, CU Boulder's Correll Lab, Aptima, Stanford, OTL, and ISR - University of Coimbra.

Making ROS More Open

We strive to make ROS as open as possible. From ROS's early days hosted on SourceForge, to a community-editable wiki, to open code reviews, we've done our best to perform ROS development out in full view of the public. However, we recognize that we can do more and are pursuing two major efforts to do so.

First, we created a new process for proposing changes to ROS. This process, called ROS Enhancement Proposals (REPs), empowers you to contribute to ROS development. It also provides better insight into current efforts.

Our second effort lays the groundwork for a potential ROS Foundation, an organization for the long-term development of ROS. We are inspired by the Mozilla Foundation, Apache Software Foundation, and GNOME Foundation, which act as stewards for public technologies. These foundations were not created overnight, nor were they created alone. We have already received invaluable advice from our friends at Mozilla on how to get started; now we need your help.

We invite you to get involved -- how can you play a role? We need developers for the core libraries, researchers to push the envelope, and companies to bring it together. As a community, these are all things we already have and are already doing. All we need to do is take the next step together.

This third year for ROS has shown us the size and strength of the ROS community. As our community continues to grow, we hope that we can better combine our strengths to meet the challenge of creating an open platform for robotics.

Concluding Thoughts

Just a few short years ago we set out to create an open software platform that lets roboticists focus on innovation, rather than reinventing the wheel; an open source robot operating system that is free for others to use, change and commercialize upon.

Three years later we are really excited by our community and what it has done. Whether you're talking about robots, libraries, companies, or research labs, the growth and breadth that we have seen has been stunning. We are grateful for your participation and have done our best to respond to your needs by making ROS better and more open. We're looking forward to the next three years (and many years after that), working together to build what's next.

Cody, from Georgia Tech's Healthcare Robotics Lab, is now able to give patients sponge baths. We've previously featured Cody's drawer-opening skills as part of our "Robots Using ROS Series." This work is by Chih-Hung King, Tiffany Chen, Advait Jain, and Charlie Kemp.

They've previously explored using force-feedback teleoperation interfaces to do the same task. This time around, they are taking advantage of Cody's tilting laser-range finder (specs for building your own) and a camera to perform the task autonomously.


In this latest C Turtle update, we are now including two stacks from utexas-art-ros-pkg: art_vehicle and velodyne.

- art_vehicle 0.3.1

- velodyne 0.2.0

Other updates:

- camera_drivers 1.2.5

- driver_common 1.2.2

- joystick_drivers 1.2.1

- motion_planning_common 0.2.5

- pr2_ethercat_drivers 1.2.4

- ros_realtime 0.4.2

Find this blog and more at planet.ros.org.